|

Since the emulated Z80 runs much faster than a real Z80, the instructions for the AY chip are delivered in short bursts, once per frame (at 50 Hz). Because of this, the generated audio signal is not timed exactly right. Normally this is not a problem, since the timing error is less than one frame, i.e. 0.02 seconds. However, when a program relies on precise timing to generate digital sound, the result will not play back correctly.

One way to generate digital sound on an AY chip is to switch both the tone and noise signals off in the mixer. This results in a permanently high output, which can then be modulated with the volume control to produce a waveform. This technique is used in this demo: b2gemba.tap.

To handle this I implemented a class that worked in the same way as the beeper, using the NAudio BufferedWaveProvider. It was activated by setting both tone and noise off in the mixer. Whenever the volume changed, I would feed the buffer with a number of samples corresponding to the number of T-states that had passed since the previous volume change, using the previous amplitude. The NAudio player would then play back the samples at the correct rate. This worked in principle, but the sound quality was not great.

I also found that some programs use another technique, which my solution did not support. One such game is Parsec (an excellent game, by the way), which modulates the volume of a high-pitched signal (pitch value 0) to generate speech synthesis. To handle this I had to synchronize the regular AY signal with the Z80.

After some failed experiments with extending the buffered wave provider to handle all aspects of the AY signal, I chose instead to implement an instruction queue of sorts in my existing solution. Instead of processing instructions sent to the AY directly, the instructions are now placed in a queue, together with the sample number within the frame at which the instruction should be processed (the sample number corresponding to the T-state at which the instruction was received). I then added a routine in the signal generator's Read method that pulls instructions from the queue at the correct point in time, based on the current sample number within the frame being processed. This solution also handles the case where a constant high signal is modulated.
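The queue idea can be illustrated with a small, language-neutral sketch. It is written in Python rather than the emulator's own C#, and it is not the actual implementation: the class name `QueuedAY`, the helper `tstate_to_sample`, the 44100 Hz sample rate and the 70908 T-states per frame are all assumptions made for the example, and only the channel A volume register (register 8) is handled.

```python
from collections import deque

SAMPLE_RATE = 44100        # assumed output sample rate
FRAME_RATE = 50            # frames per second, as described in the post
TSTATES_PER_FRAME = 70908  # assumed Spectrum 128 frame length in T-states
SAMPLES_PER_FRAME = SAMPLE_RATE // FRAME_RATE


def tstate_to_sample(tstate):
    """Map a T-state within the frame to a sample index within the frame."""
    index = tstate * SAMPLES_PER_FRAME // TSTATES_PER_FRAME
    return min(SAMPLES_PER_FRAME - 1, index)


class QueuedAY:
    """Hypothetical stand-in for the AY signal generator (volume only)."""

    def __init__(self):
        # Entries are (sample_index, register, value), in arrival order.
        self.queue = deque()
        self.volume = 0

    def write(self, tstate, register, value):
        # Called from the CPU side: defer the write instead of applying it.
        self.queue.append((tstate_to_sample(tstate), register, value))

    def read_frame(self):
        # Called from the audio side: render one frame, applying each queued
        # write once its sample index has been reached.
        out = []
        for n in range(SAMPLES_PER_FRAME):
            while self.queue and self.queue[0][0] <= n:
                _, register, value = self.queue.popleft()
                if register == 8:  # channel A volume register
                    self.volume = value & 0x0F
            out.append(self.volume)
        return out
```

For example, writing volume 15 at T-state 0 and volume 3 halfway through the frame makes `read_frame()` return a frame whose first half holds 15 and whose second half holds 3, even though both writes arrived before any audio was rendered.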


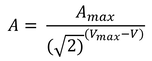

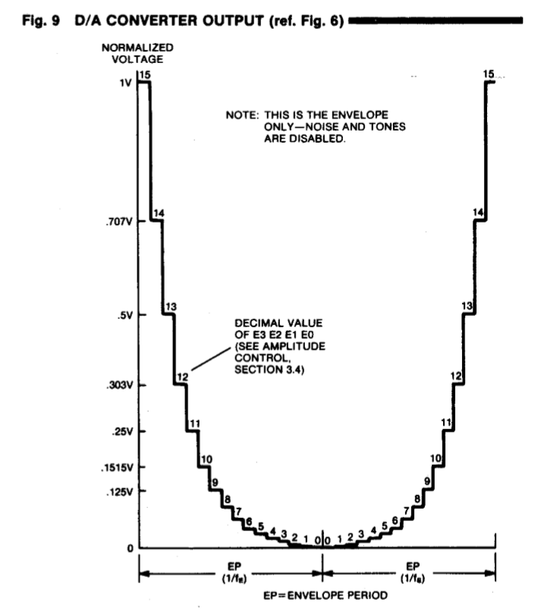

Recently, the game developer Alessandro Grussu discovered that the soundtrack of his new game Sophia II sounded strange on my emulator, specifically that something was wrong with the mix. He kindly provided me with his original PT3 file, which I could open in Vortex Tracker to analyze. After some head scratching and failed remedies (patiently tested by Alessandro), I finally understood that the problem was related to how my emulator translated the AY volume setting to an audio signal amplitude.

The AY signal, which is generated by the AYSignalGenerator class, can have an amplitude between 0 and 1. Since the AY signal is played back by the same audio provider as the beeper, the AY signal is limited to a maximum amplitude of 0.1 to achieve balance between the two audio sources. So basically the AY volume setting of 0 to 15 needs to be translated to a signal amplitude of 0 to 0.1. I had implemented this in a linear way, so that each AY volume step increased the signal amplitude by 0.00667 (0.1/15). This actually worked out quite well, or at least I thought so (maybe because it never crossed my mind that it could be otherwise).

However, the Sophia II soundtrack is very dynamic, and with my linear model the dynamics were largely lost. I needed to increase the perceived difference between low and high volume levels by making the signal amplitude grow faster than linearly at higher AY volume levels. The solution, based purely on trial and error, was to relate the signal amplitude to the AY volume setting raised to the power of 3, like this:

A = 0.1 × (V / 15)^3

where A is the signal amplitude (max value = 0.1) and V is the AY volume setting (max value = 15).

I'm not certain that the above relation is 100% correct, but the result sounds good to me.

Update 2019-04-15: I found the correct function for the amplitude on the CPC Wiki, here. I also found this diagram in a manual for the AY-8910, which matches the function well:

Update 2020-08-22:

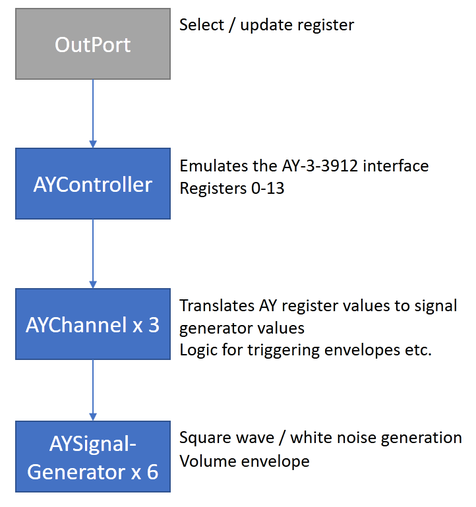
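To make the difference between the linear and the cubic volume mapping concrete, here is a minimal sketch in Python (not the emulator's C# code). The function names are made up for the example, and scaling the cubic curve so that volume 15 gives exactly 0.1 is an assumption based on the maximum values stated in the post.

```python
MAX_AMPLITUDE = 0.1  # AY signal ceiling described in the post
MAX_VOLUME = 15      # highest AY volume setting


def linear_amplitude(volume):
    """Original approach: equal amplitude step per volume step."""
    return MAX_AMPLITUDE * volume / MAX_VOLUME


def cubic_amplitude(volume):
    """Trial-and-error approach: amplitude follows volume cubed.

    Assumes the curve is normalized so that volume 15 maps to 0.1.
    """
    return MAX_AMPLITUDE * (volume / MAX_VOLUME) ** 3


if __name__ == "__main__":
    for v in (0, 5, 10, 15):
        print(v, round(linear_amplitude(v), 4), round(cubic_amplitude(v), 4))
```

At volume 5 the linear mapping already gives one third of the full amplitude, while the cubic mapping gives only 1/27 of it, which is why quiet passages stay quiet and the dynamics of a track are preserved.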

I have replaced the above function with the actual, measured amplitude values reported here.

Finally, a big step towards emulating the Spectrum 128 is finished. In a way it was easier than emulating the beeper, since the AY emulation can run on its own in parallel with the CPU, whereas the beeper needs to be synced precisely with the processing time of each instruction. The AY emulation consists of three classes:

The figure below illustrates how the components interact. As with the beeper, the NAudio library was used for emulating the AY chip. The audio output is handled by the AYController class, via WasapiOut. A mixer is used to handle input from the three AYChannel objects.

For generating the square wave and white noise I initially used the SignalGenerator class included in the NAudio library. I then handled envelopes in the AYChannel class, where I used a timer to adjust the signal volume according to the selected envelope pattern. However, I realized that the envelopes can be very fast (in the kHz range), which would require a precision that is impossible to achieve with a timer. I therefore replaced the SignalGenerator with my own class (well, to some extent my own, anyway), in which the envelopes are integrated with the actual signal generation. This worked very well. I also had to modify the white noise algorithm so that the frequency of the white noise can be set, which was not possible in the original SignalGenerator class. |
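The idea of computing the envelope inside the sample loop, instead of adjusting the volume from a timer, can be sketched as follows. This is a simplified Python illustration, not the emulator's C# class: the function names, the 44100 Hz sample rate and the sawtooth-down envelope shape are assumptions, and the real chip uses discrete envelope steps and a shift-register noise source rather than a continuous ramp and Python's `random` module.

```python
import random

SAMPLE_RATE = 44100  # assumed output sample rate


def square_with_envelope(tone_hz, envelope_hz, n_samples):
    """Square wave whose amplitude follows a repeating decay envelope.

    The envelope level is recomputed for every sample, so it stays accurate
    even when envelope_hz is in the kHz range.
    """
    out = []
    for n in range(n_samples):
        t = n / SAMPLE_RATE
        square = 1.0 if (t * tone_hz) % 1.0 < 0.5 else -1.0
        level = 1.0 - (t * envelope_hz) % 1.0  # ramps from 1 towards 0
        out.append(square * level)
    return out


def pitched_noise(noise_hz, n_samples, seed=0):
    """Noise that picks a new random level only noise_hz times per second."""
    rng = random.Random(seed)
    out = []
    level = 1.0
    last_step = -1
    for n in range(n_samples):
        step = n * noise_hz // SAMPLE_RATE  # noise_hz must be an integer
        if step != last_step:
            level = rng.choice((-1.0, 1.0))
            last_step = step
        out.append(level)
    return out
```

Lowering `noise_hz` makes each random level last for more samples, which gives the noise a lower perceived pitch. This is the property that a fixed-rate white noise generator cannot provide.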


|
